Compute! Magazine

Back to reminiscing! I’m dedicating this part in the series to this magazine, because I think it was that good. Compute! was published from 1979 to 1994. Though it started out focusing exclusively on computers that used the MOS 6502 CPU or some variant, like the Apple II, Atari 8-bits (400, 800, XL and XE series), and Commodore 8-bit computers (the PET, VIC-20, and C-64), it made a real effort to cover a variety of computer platforms. Early on they added “support,” I’ll call it (regularly featured articles and type-in programs), for the Radio Shack Color Computer (a Motorola 6809 8-bit model) and the Texas Instruments TI-99/4A (with the TMS9900 16-bit CPU). Later they added the Atari ST and the Commodore Amiga, which were 16-bit Motorola 68000 models. Last, they added the IBM PC to their roster.

They occasionally had some news items on the Apple Macintosh, but they didn’t cover it much, and never published type-in programs for it.

In Compute!’s early days they covered a bunch of kit computers. Kit computers came as a collection of parts (boards, chips; possibly coming with a case, keyboard, disk drive(s), etc.) that adventurous consumers could buy. Using supplied instructions, solder, and a soldering iron, they literally built the machine themselves. Some were simple, meant to be educational, showing how a computer worked. Others were full-fledged computer systems. These machines dropped off the radar of the magazine by the time I discovered it in 1983. Eventually the Commodore PET and the Color Computer were dropped from it as well.

Appeal

As you can see, the style of the magazine was welcoming. I’d even call it “pedestrian.” It didn’t come off as an unapproachable technical magazine, but rather a magazine for “Home, Educational, and Recreational Computing.” As a teen it drew me in. The general format within matched this style for the most part. The articles and supplemental sections that were included in each issue guided you step by step on what you needed to do to enter the programs it contained, and use them. The overall feel of the magazine was “user friendly.”

It was literally everywhere, too. I’d often see it in the grocery store magazine aisle. Other computer magazines like Byte and Creative Computing were there, too. My grandmother got me gift subscriptions to Compute! out of the Publishers Clearing House sweepstakes whenever she’d enter.

Type-ins

One of the things I thought was great about the magazine was its type-in programs. The internet existed then, but most people didn’t have access to it. If people were plugged into a network it was a proprietary one, like CompuServe, and it was expensive. People paid for connection time by the hour, and in some cases by the minute. Flat-rate pricing for this kind of access didn’t exist then. So software was distributed on diskettes (floppy disks), or ROM cartridges. In most cases the source code for the software didn’t come with it. You were just expected to use it.

Compute! published the full source code to programs in their magazine. They published utilities, occasionally applications, and plenty of games. If you wanted to use the program you had to type it in first. They eventually added “disk” editions of their magazine, for a premium, which contained the most prominent programs they published, ready to run.

They kept things simple. They published programs in BASIC or machine code. It was rare if they published code in other languages. In many cases they intermixed machine code with BASIC, to achieve optimal speed for something. Machine code was encoded either in bytes in a string (they had notation in the listings to show you how to enter them) to make the code relocatable, or as decimal numbers in DATA statements in BASIC which were POKE’d into fixed addresses. Later they added MLX, a utility that allowed you to type a complete machine code program in decimal without having to write a BASIC program to create the machine code.
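To make the DATA-and-POKE approach concrete, here is a minimal sketch in Python rather than BASIC. It is an illustration under assumptions, not a listing from the magazine: the `memory` array, the `poke` and `load_data` helpers, the load address 49152, and the six-byte routine are all hypothetical stand-ins. The bytes happen to be valid 6502 opcodes (LDA #0, STA $D020, RTS), written in decimal the way a listing would print them.

```python
# Hypothetical stand-in for the machine's 64 KB of RAM.
memory = bytearray(65536)


def poke(address, value):
    """Store one byte at an address, as BASIC's POKE does."""
    if not 0 <= value <= 255:
        raise ValueError("POKE values must fit in one byte")
    memory[address] = value


def load_data(start, data):
    """Mimic a FOR/READ/POKE loop over DATA statements."""
    for offset, value in enumerate(data):
        poke(start + offset, value)
    return start + len(data)  # first free address after the routine


# Decimal bytes as a listing would print them (illustrative routine only):
# 169, 0 = LDA #0; 141, 32, 208 = STA $D020; 96 = RTS.
ROUTINE = [169, 0, 141, 32, 208, 96]
START = 49152  # assumed fixed load address, chosen for illustration

end = load_data(START, ROUTINE)
print(list(memory[START:end]))
```

Because the bytes land at a fixed address, the routine only works if nothing else is using that memory, which is why the string-encoded, relocatable form was the other option.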

Some background

Every computer back then, with the possible exception of the Mac, came with a programming language, usually some form of BASIC. The operating system was almost invisible on most 8-bit computers. More often than not the BASIC language was your user interface, even if you wanted to do something like list the files you had on disk. There were OS-level APIs, but they were minimal, and you had to use machine code to access them. Sometimes the BASIC language you used contained built-in commands that would do what you wanted, adding a layer of abstraction. In many cases you had to POKE values into specific memory locations to get a desired result, like create a “text window” of a specific size on the screen that would word wrap, or custom configure a screen for certain graphics characteristics. On some models you couldn’t create sound without doing this. Compared to today things were extremely primitive.

Windowing systems for 8-bits like GEOS, and Microsoft Windows 1.0 didn’t come along until later. People by and large used these computers in “modes.” There were one or more text modes, and a couple or several graphics modes. It was possible to mix text and graphics. Depending on what you wanted to do, in which mode, you might have it easy, or you might have to jump through a lot of hoops to make it work in terms of programming the computer to do it.

One thing was for sure. If speed was your top priority you had no choice but to program in machine code (just typing in numbers for opcodes and data) or assembly language. Most commercial software for 8-bit computers was programmed this way.

For their time, these machines were rather advanced. They had graphics and sound capabilities that some of the more powerful machines of the day didn’t have, because the people who made them didn’t think that sort of thing was important. The majority of earlier computer platforms were harder to use, and less user friendly.

Compute!’s evolution

In the early days Compute! didn’t publish too much machine code. They mostly published programs in BASIC, because they wanted something that was easy to learn, and could be understood by mere mortals. In the early editions of the magazine they explained the programs to you.

Later on they began to add machine code. Usually it was in little dribs and drabs. Most of the program would be written in BASIC, so not much of the educational value was lost.

As time passed, the accompanying articles got less educational. This didn’t matter too much to me. I could get an idea of what was going on by going through the BASIC code, and learn some new ideas about programming.

For some reason they never got into assembly language. They could’ve gotten the speed of machine code and written something educational at the same time. I think they compromised. It seemed like their main principle was they always wanted to publish programs in the language they knew came with the machine, so that the reader didn’t have to go out and buy another language or an assembler in order to enter their programs and get them to run.

As time passed, they tended more and more to publish programs that were written entirely, or almost entirely in machine code–in decimal or hexadecimal, depending on the platform. They had a short program you could type in and use called MLX that allowed you to type in the numbers in sequence, save it as a binary file, and then run it. This was a real retrogression. Professional programmers used to program in machine code in a manner similar to this as a matter of course in the 1950s. It took all of the educational value out of it, unless you wanted to buy a disassembler and read the code after entering it.

I suspect this happened because more readers were buying the “disk” edition of the magazine, so it made no difference to the reader what language the program was in, unless they were interested in actually learning programming. Maybe Compute! came to see itself as a cheap way for people to get software without having to work for it.

In 1988 their flagship Compute! magazine quit publishing type-ins entirely. I remember I was heartbroken.

I had a subscription to the magazine for years, and getting each new issue was the highlight of each month for me. I read it cover to cover. I would often find at least a few programs I liked in each one, and I would eventually get around to typing them in. The magazine did a fairly good job of giving you enough of a description of the program so you could decide whether it was worth the effort. Often they included screenshots, so you could see what it should look like when you were done. It was always a joy to see it run.

Bugs

When I first discovered the magazine I learned quite a bit about debugging. You would type a line in, the BASIC editor would accept it (it was syntactically valid), but it was logically wrong. I had to figure out what went wrong. That was part of the process at first.

Eventually Compute! added checksums to their type-ins, and they gave you a short program called “Automatic Proofreader” that you could type in, and it would checksum the lines you typed for any program. You would enter the line into the editor, you would get the checksum, and you could compare it to the checksum they had by the same line in the published program. If they didn’t match, you knew you did something wrong. What made life interesting was their checksum program didn’t always help you. The Atari 8-bit version (the kind I used sometimes) wasn’t sophisticated enough to check for transposed characters. So it was still possible to type something wrong and not know until you ran it.
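I don’t know the exact algorithm the Atari proofreader used, so take the following as a sketch of the general problem rather than a reconstruction. It assumes a plain additive checksum (the sum of the character codes, modulo 256), which ignores character order, and compares it with a position-weighted variant. Both function names and the sample BASIC line are made up for illustration.

```python
def additive_checksum(line):
    """Sum of character codes, modulo 256. Character order is ignored."""
    return sum(ord(ch) for ch in line) % 256


def weighted_checksum(line):
    """Weight each character code by its 1-based position, modulo 256."""
    return sum(i * ord(ch) for i, ch in enumerate(line, start=1)) % 256


correct = '10 PRINT "HELLO"'
typo = '10 PRNIT "HELLO"'  # I and N transposed

# The additive sum cannot tell the two lines apart...
print(additive_checksum(correct) == additive_checksum(typo))
# ...while the position-weighted sum can, for this pair.
print(weighted_checksum(correct) == weighted_checksum(typo))
```

An order-insensitive sum gives the same value for any rearrangement of the same characters, which matches the blind spot I ran into: a transposed pair sails through, and you only find out when you run the program.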

You had to be wary or patient with type-ins. Every month the magazine published code errata in a column called “Capute!” (pronounced “kah-PUT”).

Even though I looked forward to each issue, and would pick out what I wanted, I was often behind. Typing in those programs took a good amount of time, a couple hours at least. I was busy writing my own software sometimes, and I had a life outside of this stuff. So I’d usually get the corrections to something they’d published before I typed it in. It was kind of maddening trying to keep track of this. I literally had a list I updated regularly of programs I wanted to enter, what issue they were in, along with the issues for the corresponding errata. It would’ve been nicer if they had tested their stuff more thoroughly before publishing it, but maybe that was expecting too much.

Compute!’s amazing editors

Programmers who wanted to get their stuff published sent submissions to Compute!’s editors. Usually it was for a single platform, like the Atari 800, or the Commodore 64. If their editors liked the program they 1) paid the author, and 2) usually ported the original program to a bunch of different platforms. I never figured out how they did this. If they got a program that was originally written for the C-64, for example, they would port it to some or all of the following: the Apple II, the Atari 8-bit, the TI-99/4A (sometimes), the Atari ST, the Commodore Amiga, and/or the IBM PC. They usually published these versions in the same issue, so it was easy to compare them side by side. And with rare exception they did this every issue! Amazing, particularly since each platform was totally incompatible with the others, and they implemented things like graphics and sound totally differently. The editors didn’t try to make each version an exact clone of the original, either. They tried to keep the overall theme the same, but each would display the editor’s own style. About the only time a program would port easily was if it was just an algorithmic exercise, and its input and output were all text. That was rare.

Compute!’s franchise

The publisher did not just produce Compute!. They had a more popular magazine called Compute!’s Gazette that focused exclusively on the Commodore computer line. It was the same format as the flagship magazine, articles and type-in programs. Later they added platform-exclusive magazines for the Apple II, Atari ST, the Commodore Amiga, and the IBM PC & PCjr (the PCjr was a consumer model IBM produced for a brief time–the magazine was also short-lived), again following the same format. The platform-specific magazines continued publishing type-ins for at least a few years after the flagship Compute! stopped.

Compute! changed its format to focus exclusively on the needs of computer users in 1988. It continued on this path for about another 6 years, when it finally went under.

News & Reviews

Another thing I always looked forward to was Compute!’s coverage of what was going on at the trade shows: the Consumer Electronics Show (CES), and Comdex. They covered them every year. I think I came upon Compute! at just the right time for this. The period from 1983-1985 was a very exciting time for personal computers. As I think I mentioned before in another post, everybody and his brother was coming out with a machine, though the bubble burst on this party within a year or two.

Reading about these events, and their reviews of newly released computers, I got a sense that I was watching history in the making. In hindsight I’d say I was right.

1984 saw the release of the first Apple Macintosh. Compute! covered the unveiling, describing the event to a tee, but printed no pictures of the event or the computer. For a bit there I didn’t even know what it looked like. For years I remembered some of the description of the event from this article. I was gratified to finally catch some video of it in Robert X. Cringely’s “Triumph of the Nerds” documentary, which was broadcast in 1996.

1985 saw the release of the Atari ST and the Commodore Amiga. My mind was blown by what I was reading about them. It was so exciting. These computers blew the doors off of what came before. Only the Macintosh could compare.

The best of Compute!

Since I’ve talked a lot about why the magazine was so great, I figured I should show you their best stuff. Here are what were, in my opinion, the magazine’s best type-in programs. I wanted to do more video, but I’ve had to struggle to show this much. So most of the depictions below are still images of them.

(Update 9/25/08: I’ve managed to add a few more videos below, replacing the still shots I had, and I added one more old favorite.)

“Superchase”, by Anthony Godshall, on Atari 8-bit, October 1982, p. 66

This game was a thriller way back when. You played a treasure hunter, going through caves, leaving tracks as you went. A monster is chasing after you. You try to get all of the treasure before the monster catches up to you. The thrilling part is the maze generating algorithm sometimes forces you to backtrack and hide out in hopes that the monster will go right past you so you can escape behind it. The monster follows your tracks, but my guess is it doesn’t necessarily follow the direction of the tracks. If it sees tracks going in two different directions it looks like it makes a random guess about which way to go. You see this a bit in the video.

There was supposed to be a trick you could use to slip past the monster if it was coming right at you. The article said if you “shook the joystick” back and forth real fast you could get the monster to accelerate, and you could breeze right by it. I tried that at the end of the video, but no joy…

“Closeout!”, by L. L. Beh, on Atari 8-bit, March 1983, p. 70

Even though it doesn’t look like it, the game had the feel of Pac Man. The gist was you’re a shopper in a store, picking up “sale items” (the dots on each level) in aisles, and baddies were chasing after you. You have a gun, and so do the baddies. What makes it interesting is the bad guys each had guns of different range, so you could get away from one at a short distance, but not from the others. The bad guys had decent AI, too. Fun game.

“Caves of Ice”, by Marvin Bunker and Robert Tsuk, on Atari 8-bit, September 1983, p. 50

As best I can remember this was the only 3D game they published. It was based on “QuintiMaze”, by Robert Tsuk, written for the Apple II. QuintiMaze was published in Byte Magazine. Bunker and Tsuk collaborated on this version. The goal was to escape from a 5x5x5 3D maze. The maze was randomly generated each time you played. There were no bad guys you had to watch out for, or time limits. It kept track of how much time it took you to escape.

Maze games and maze-generating algorithms were popular around this time in Compute!. A bunch of games of this type had been published before this, all 2D of course.

“Worm of Bemer”, by Stephen D. Fultz, on Atari 8-bit, April 1984, p. 74

I liked this game because it had the kind of polish that I typically saw in commercial games at this time. The goal was to eat mushrooms and escape each room. Each time you’d eat a mushroom you’d grow longer. The catch was you were always moving forward, no stopping. You had to be careful not to trap yourself. The game also made it difficult to escape, making the exits very narrow. I don’t know if this was done deliberately or not, but the response time from when you moved the joystick in a direction to the time when it actually responded was slow. You had to literally think one or two moves ahead, or else you’d screw up.

“Acrobat”, by Peter Rizzuto, on Atari 8-bit, February 1985, p. 56

The point of this game was to dodge obstacles in all sorts of ways. You had to jump, flip, and slide to get over and under stuff coming at you. It was a sideways scroller. It was unique because it had a moving background to convey motion, and the action with the obstacles got complicated. It was a “thinking” action game. You had to think fast on your feet.

“SpeedScript”, by Charles Brannon, on Atari 8-bit

(Image from Wikipedia.org)

SpeedScript was originally written for Commodore 8-bit computers by Charles Brannon, and published in the January 1984 issue of Compute!’s Gazette (see the link). It was later ported to other platforms.

They also published “SpeedCalc,” a spreadsheet program.

Years later I got SpeedScript off of a BBS. I wasn’t crazy enough to type in the whole program. It was long. When I got my own 8-bit computer I bought a commercial word processor along with it. I found SpeedScript quite handy for viewing text files, though. It had a small footprint on disk, and was quick to load.

You can find the following issues here.

Commodore 64 version, March 1985, p. 124

Commodore VIC-20 version, April 1985, p. 100

Atari 8-bit version, May 1985, p. 103

Apple II version, June 1985, p. 116

“Biker Dave”, by David Schwener, on Atari XL/XE (8-bits), November 1986, p. 38

The action in this game reminded me of some coin-op video games I saw at the time, though this was nowhere near coin-op quality. You’re a stunt biker trying to jump a bunch of cars. You had to get your bike up to the right speed to make the jump, or else you’d crash. You also had to make sure you didn’t accelerate too quickly, or else you’d wipe out before you made the jump. Each time you successfully made the jump, another car was added, so you had to increase your speed to the jump each time. Neat game!

“Laser Chess”, by Mike Duppong, June 1987, p. 25

Atari XL/XE translation by Rhett Anderson, Assistant Editor

The above video is my meager attempt at a demo, playing both sides. It’s not that interesting in terms of strategy (I barely had one for each side), but it shows some of the action. I didn’t play a complete game, because one side’s laser got blasted. I figured after that the end was inevitable, and not very interesting.

I referred to this game in another post on learning Squeak, since Stephan Wessels had published a tutorial whose end product looked similar to it.

Laser Chess was one of the few programs Compute! published that had a lasting legacy. It was originally published in a 1987 edition of Compute!’s Atari ST Disk & Magazine. Mike Duppong had won a $5,000 programming contest put on by the magazine, with this game. He originally wrote it in Modula-2. It was adapted by Compute!’s editors for the Amiga, Commodore 64, Apple II, and the Atari XL/XE, using BASIC and machine code.

Update 12-21-2013: There used to be online versions of this game, but I haven’t found them recently. Other versions of it are out there, if you look for it.

I could see that there was something special about this game, but it was not one that I got into much. I was never that good at playing chess to begin with. Not that chess strategy was necessary here; the game evoked its own strategy, and it was complicated in its own way. In regular chess you strategize based on the position of pieces and how they can move. Here, you strategize mostly by position and orientation of pieces, though it’s possible to capture pieces by just moving yours onto an opponent piece’s square, as in regular chess. The difference here is each player gets two moves per turn, and every piece can only move one space in an orthogonal direction (forward, back, or sideways) per move. You could move one space diagonally by combining a vertical and a sideways move, which took up a whole turn. You could also rotate a piece, which counted as one move. This added another dimension, because most pieces have a reflective surface, and each player has a laser. If you positioned your pieces in the right orientation at the right time you could blast an opponent’s piece off the board! Firing the laser counted as one move. Each side could only fire their laser once per turn.

You had to be careful setting up your laser shots. Your opponent could use your pieces’ reflective surfaces against you. You could also accidentally kill one of your own pieces if you didn’t carefully consider where the laser beam went.

Each side also had a “hypercube” piece. When you moved it onto another piece’s square it randomly placed that piece on an open space on the board. I didn’t use it in the above video. I think it was the hollow square piece.

Edit 5-13-2012: I couldn’t resist this. Here’s a hilarious scene from “The Big Bang Theory” TV show where the gang plays “secret agent laser obstacle chess.” 😀 It’s from Season 2, Episode 18, called, “The Work Song Nanocluster.”

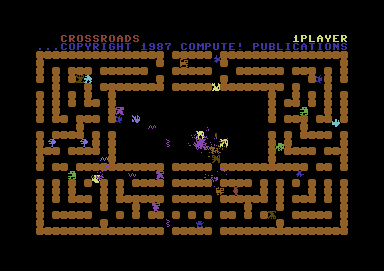

“Crossroads”, by Steve Harter, on the Commodore 64, Compute!’s Gazette, December 1987

This game was published exclusively in Compute!’s Gazette. I only know about it from a friend who used to use Commodore computers. This game is one of the greats. It’s still remembered by the people who played it.

I’ve played it a bit. You’re a guy trying to pick up “shields” (they look a bit like swirling Japanese flying stars), and meanwhile beasties are trying to chase after you and eat you. Picking up shields makes you more resistant to attack. You have to pick up a certain number to go on to the next level. The baddies can pick up the shields as well, and gain strength from them. You have to kill them to get their shields.

What was unique was that the beasties would go after each other as well (that’s the mayhem you see in the screenshot above). Sometimes you could provoke them to do this, to distract them. It was a pretty involved game. I would’ve liked to have posted a video of this, but no matter what I tried it didn’t turn out well.

“Screen Print,” by Richard Tietjens, April 1988, p. 64 – This was a utility (so there’s no picture for it), but I thought it really deserved a mention. Screen Print was one of the last programs they published for the Atari 8-bit. It was one of the most useful utilities they ever published, in my opinion. I could literally give it any Atari graphics file format, it would decode it properly, and display it on the screen. If I wanted it to, it would also print out a nice copy on my dot matrix printer.

Something was curious about it though. As I typed it in I noticed a couple of code sections labeled “Poker” in the comments. At the time I had no idea what it stood for.

I had gotten my own Atari 8-bit computer and a modem around this time (I explain this in Part 1), and I began to explore BBSes. Somewhere along the line I found that people had uploaded graphics images from the computer game Strip Poker. Duh! I was a teenage boy. Of course I downloaded them! I tried looking at them with a simple bitmap viewer I had written, and all I got was garbage. They were encoded. Made sense. They didn’t want people peeking. You had to win some hands in the game to see the naughtier pictures. It occurred to me one day, remembering back to when I typed in “Screen Print,” “Hmm. There were those sections called ‘Poker’. I wonder…” I loaded it up, tried it out, and sure enough it loaded those Strip Poker images just fine. 😉 I wonder if the editors at the magazine knew about this. They certainly didn’t mention it.

Strip Poker was a computer game that had been out for a long time, made by Artworx. I remember seeing (tasteful) ads for it in my earliest issues of Compute!, going back to 1983. The earliest versions of it ran on 8-bit computers: Atari, Commodore, and Apple, using artistically rendered graphics (no digitizing). Later versions of it were made for the Atari ST, and Amiga, and eventually the PC. Amazingly, Artworx is still hanging on, making the same product!

Honorable mentions

There were other games Compute! published that I enjoyed, but I put them in a bit of a lesser category. It’s subjective:

Outpost, by Tim Parker, June 1982, p. 30 – A character-based (as in, ASCII), turn-based game where you had to defend yourself against computer-controlled attacking ships. It reminds me of the old Star Trek game, but you were stationary.

Goldrush, July 1982 – You mined for gold with explosives. You had to watch out for cave-ins, and try not to get trapped with no dynamite left.

Ski! (Slalom), by George Leotti and Charles Brannon, February 1983, p. 76 – You skied down a mountain, went through gates, avoided obstacles, and picked up points.

The Witching Hour, by Brian Flynn, October 1985, p. 42 – It was published for Halloween. It was witches vs. ghosts, kind of like checkers.

Switchbox, by Compute! Assistant Editor Todd Heimark, March 1986, p. 34 – Kind of like Pachinko, but since it was in BASIC, much slower.

Laser Strike, by Barbara Schulak, December 1986, p. 44 – A clone of Battleship.

Chain Reaction, by Mark Tuttle, January 1987 – A unique turn-based game. You played on a board with “explosives.” It really functioned more like a combination of a nuclear chain reaction and Reversi. You played against an opponent. Each space on the board had a different “critical mass” for explosives. If your space exploded, it would shoot explosives of your color into the adjoining spaces, changing the color of all explosives in that space to your color, and adding the new explosive to the ones already there. This could set off–you guessed it–a chain reaction. You tried to reach “critical mass” with your explosives in just the right places at just the right time to gradually change all of your opponent’s colored explosives to your color. It was an easy game to play. You literally could set up chain reactions that would go on for about a minute. But then, this was mostly due to the slowness of BASIC.
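For the curious, the Chain Reaction rules described above can be sketched in a few lines of Python. This is my own reconstruction from memory of how the game played, not Mark Tuttle’s code. The 5x5 board, the `place` function, and the rule that a space’s “critical mass” equals its number of adjoining spaces are all assumptions made for illustration.

```python
from collections import deque

SIZE = 5  # assumed board size, for illustration only


def neighbors(r, c):
    """Orthogonally adjoining cells that are on the board."""
    steps = [(-1, 0), (1, 0), (0, -1), (0, 1)]
    return [(r + dr, c + dc) for dr, dc in steps
            if 0 <= r + dr < SIZE and 0 <= c + dc < SIZE]


def critical_mass(r, c):
    """Assumption: a cell explodes when it holds one piece per neighbor."""
    return len(neighbors(r, c))


def place(board, r, c, player, max_explosions=1000):
    """Add one explosive for `player` at (r, c) and resolve explosions.

    `board` maps (row, col) -> (owner, count). Returns the number of
    explosions triggered. The cap guards against runaway cascades.
    """
    owner, count = board.get((r, c), (player, 0))
    if owner != player:
        raise ValueError("cannot place on an opponent's space")
    board[(r, c)] = (player, count + 1)
    pending = deque([(r, c)])
    explosions = 0
    while pending and explosions < max_explosions:
        cell = pending.popleft()
        owner, count = board.get(cell, (None, 0))
        if count < critical_mass(*cell):
            continue
        explosions += 1
        remaining = count - critical_mass(*cell)
        if remaining:
            board[cell] = (owner, remaining)
            pending.append(cell)
        else:
            del board[cell]
        for n in neighbors(*cell):
            _, n_count = board.get(n, (owner, 0))
            board[n] = (owner, n_count + 1)  # capture the space, add one
            pending.append(n)
    return explosions


board = {(0, 1): ("blue", 2), (0, 0): ("red", 1)}
print(place(board, 0, 0, "red"))  # corner reaches critical mass
print(board)
```

In the demo, red’s corner explodes into blue’s neighboring space, which captures it, pushes it to critical mass, and sets off a second explosion. In Python this resolves instantly; in BASIC you got to sit and watch.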

There’s a site dedicated to Compute! articles. They’ve gotten permission to publish many of them on the web. There are many they haven’t obtained permission for, and so you see them mentioned, but no links to articles. When Compute! went under, all copyrights reverted to the original authors. So anyone wanting to republish articles legally has had to try and track down the authors and get their permission. A real pain in the keester. 😦

Edit 5-11-2012: Ian Matthews has digitized every issue of Compute! into searchable PDF format. Now you can read full issues, all the articles, see all the programs with full source code, and see what was selling and what it was selling for (yikes!) Now (hopefully) Compute! will live on in posterity where everyone who wants to see it can find it. He broke up many of the issues into sections, as the PDFs get pretty large to download.

I updated all of my Compute! links in this post, so that you can look at the articles for yourself. This way you can finally see these issues as I saw them.

Matthews has requested that if people want to view more than a few issues on his site that they purchase a DVD of the complete set, as downloading a bunch of issues will cost him in bandwidth. So I’m not linking directly to his PDFs.

I envisioned doing something like this about 15 years ago, but felt squeamish about it 1) because of the copyright issue, and 2) when I learned that the best way to do it would destroy every issue I had. I’d have to separate every single page from its binding (using something like a razor blade) and then run it through the scanner. I’m glad someone else had the courage to do it right. 🙂 Here’s a video Ian made, showing every single Compute! cover, from the first to the last issue.

Conclusion

The years when Compute! was around were a happy time. I still have fond memories of it. I guess today young, aspiring programmers are getting the education I got by working on open source software. Compute! was the open source software of the time, as far as I was concerned. It wasn’t the only magazine that did this, but in my opinion it was the best. There were other magazines publishing type-ins for Atari computers, like Antic, and A.N.A.L.O.G. I switched to Antic when Compute! stopped publishing them. I can’t remember, but I may have kept on with them until they went under. I found out about Current Notes in college, and came to really like it. No type-ins, but it had interesting articles on all things Atari.

Compute! was a key part of my education as a programmer. The thing I loved about them was they always had a focus on making computing fun. Sure it was frustrating to spend hours typing in a program, and debugging it, but when you were done, you got what you wanted, and you learned some things. Secondly, they had the notion that everyone could share in the experience. They hardly ever published a program for just one computer. They made sure that most of their subscribers could enjoy a program even if the original author only wrote it for one platform. That was mighty generous of them. I imagine it was hard work.

In closing I’d like to thank the editors of Compute!: Robert Lock, Richard Mansfield, Charles Brannon, Tom R. Halfhill, and anyone else I’ve forgotten. You helped make me the programmer I am today, and my teen years something special.

—Mark Miller, https://tekkie.wordpress.com